Write your Kubernetes Infrastructure as Go code - Manage AWS services

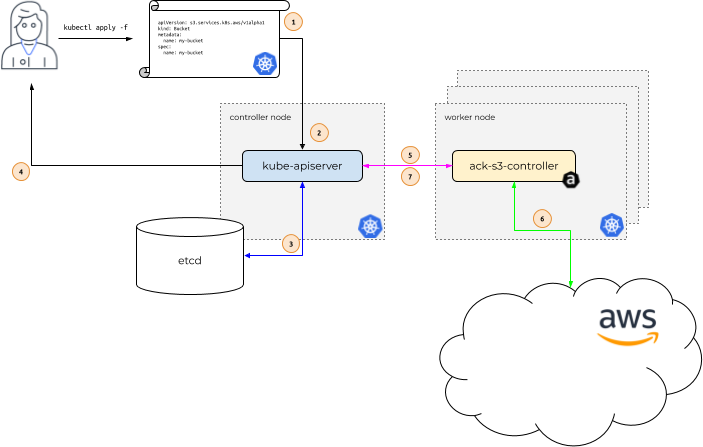

AWS Controllers for Kubernetes (also known as ACK) are built around the Kubernetes extension concepts of Custom Resource and Custom Resource Definitions. You can use ACK to define and use AWS services directly from Kubernetes. This helps you take advantage of managed AWS services for your Kubernetes applications without needing to define resources outside of the cluster.

Say you need to use an AWS S3 bucket in an application that's deployed to Kubernetes. Instead of using the AWS console, AWS CLI, AWS CloudFormation etc., you can define the S3 bucket in a YAML manifest file and deploy it using familiar tools such as kubectl. The end goal is to allow users (software engineers, DevOps engineers, operators etc.) to use the same interface (the Kubernetes API in this case) to describe and manage AWS services along with native Kubernetes resources such as Deployment, Service etc.

Here is a diagram from the ACK documentation that provides a high-level overview:

What's covered?

ACK supports many AWS services, including Amazon DynamoDB. One of the topics that this chapter covers is how to use ACK on Amazon EKS for managing DynamoDB. But, just creating a DynamoDB table isn't going to be all that interesting!

In addition, you will deploy a client application: a trimmed-down version of the URL shortener app covered in a blog post. While the first half of the chapter involves manual steps to help you understand the mechanics and get started, in the second half we will switch to cdk8s and achieve the same goals using nothing but Go code.

cdk8s? what, why? Because, Infrastructure IS code

cdk8s (Cloud Development Kit for Kubernetes) is an open-source framework (part of CNCF) that allows you to define your Kubernetes applications using regular programming languages (instead of YAML). We will continue on the same path, i.e. push YAML to the background and use the Go programming language to define the core infrastructure (that happens to be DynamoDB in this example, but could be so much more) as well as the application components (Kubernetes Deployment, Service etc.).

This is made possible due to the following cdk8s features:

- cdk8s support for Kubernetes Custom Resource Definitions that lets us magically import CRDs as APIs.

- cdk8s-plus library that helps reduce/eliminate a lot of boilerplate code while working with Kubernetes resources in our Go code (or any other language for that matter).

Before you dive in, please ensure you complete the prerequisites in order to work through the tutorial.

The code is available on GitHub

Prerequisites

To follow along step-by-step, in addition to an AWS account, you will need to have AWS CLI, cdk8s CLI, kubectl, helm and the Go programming language installed.

There are a variety of ways in which you can create an Amazon EKS cluster. I prefer using eksctl CLI because of the convenience it offers!

Ok let's get started. The first thing we need to do is...

Set up the DynamoDB controller

Most of the steps below are adapted from the ACK documentation - Install an ACK Controller

Install it using Helm:

export SERVICE=dynamodb

export RELEASE_VERSION=`curl -sL https://api.github.com/repos/aws-controllers-k8s/$SERVICE-controller/releases/latest | grep '"tag_name":' | cut -d'"' -f4`

export ACK_SYSTEM_NAMESPACE=ack-system

# you can change the region if required

export AWS_REGION=us-east-1

aws ecr-public get-login-password --region us-east-1 | helm registry login --username AWS --password-stdin public.ecr.aws

helm install --create-namespace -n $ACK_SYSTEM_NAMESPACE ack-$SERVICE-controller \
  oci://public.ecr.aws/aws-controllers-k8s/$SERVICE-chart --version=$RELEASE_VERSION --set=aws.region=$AWS_REGION
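The RELEASE_VERSION lookup above pulls tag_name out of the GitHub API response with grep and cut. If you would rather do the same from Go, here is a minimal sketch that decodes that field with encoding/json. The latestTag helper and the sample payload are my own illustrations (not part of the ACK tooling); a real program would fetch the body from the releases/latest URL used above.

```go
package main

import (
	"encoding/json"
	"errors"
	"fmt"
)

// release mirrors only the field we need from the GitHub
// "latest release" response.
type release struct {
	TagName string `json:"tag_name"`
}

// latestTag (hypothetical helper) extracts tag_name from a
// releases/latest JSON payload.
func latestTag(body []byte) (string, error) {
	var r release
	if err := json.Unmarshal(body, &r); err != nil {
		return "", fmt.Errorf("decode release: %w", err)
	}
	if r.TagName == "" {
		return "", errors.New("tag_name missing from response")
	}
	return r.TagName, nil
}

func main() {
	// Sample payload for illustration only.
	sample := []byte(`{"tag_name": "v0.1.3", "name": "example"}`)

	tag, err := latestTag(sample)
	if err != nil {
		panic(err)
	}
	fmt.Println(tag)
}
```

The explicit empty-string check matters because a rate-limited or error response from the API is still valid JSON, just without a tag_name.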

To confirm, run:

kubectl get crd

# output (multiple CRDs)

tables.dynamodb.services.k8s.aws

fieldexports.services.k8s.aws

globaltables.dynamodb.services.k8s.aws

# etc....

Since the DynamoDB controller has to interact with AWS Services (make API calls), we need to configure IAM Roles for Service Accounts (also known as IRSA).

Refer to Configure IAM Permissions for details

IRSA configuration

First, create an OIDC identity provider for your cluster.

export EKS_CLUSTER_NAME=<name of your EKS cluster>

export AWS_REGION=<cluster region>

eksctl utils associate-iam-oidc-provider --cluster $EKS_CLUSTER_NAME --region $AWS_REGION --approve

The goal is to create an IAM role and attach appropriate permissions via policies. We can then create a Kubernetes Service Account and attach the IAM role to it. Thus, the controller Pod will be able to make AWS API calls. Note that we are granting all DynamoDB permissions to our controller via the arn:aws:iam::aws:policy/AmazonDynamoDBFullAccess policy.

Thanks to eksctl, this can be done with a single command!

export SERVICE=dynamodb

export ACK_K8S_SERVICE_ACCOUNT_NAME=ack-$SERVICE-controller

# recommend using the same name

export ACK_SYSTEM_NAMESPACE=ack-system

export EKS_CLUSTER_NAME=<enter EKS cluster name>

export POLICY_ARN=arn:aws:iam::aws:policy/AmazonDynamoDBFullAccess

# IAM role has a format - do not change it. you can't use any arbitrary name

export IAM_ROLE_NAME=ack-$SERVICE-controller-role

eksctl create iamserviceaccount \
  --name $ACK_K8S_SERVICE_ACCOUNT_NAME \
  --namespace $ACK_SYSTEM_NAMESPACE \
  --cluster $EKS_CLUSTER_NAME \
  --role-name $IAM_ROLE_NAME \
  --attach-policy-arn $POLICY_ARN \
  --approve \
  --override-existing-serviceaccounts

The policy is per https://github.com/aws-controllers-k8s/dynamodb-controller/blob/main/config/iam/recommended-policy-arn

To confirm, you can check whether the IAM role was created and also inspect the Kubernetes service account:

aws iam get-role --role-name=$IAM_ROLE_NAME --query Role.Arn --output text

kubectl describe serviceaccount/$ACK_K8S_SERVICE_ACCOUNT_NAME -n $ACK_SYSTEM_NAMESPACE

# you will see similar output

Name:                ack-dynamodb-controller
Namespace:           ack-system
Labels:              app.kubernetes.io/instance=ack-dynamodb-controller
                     app.kubernetes.io/managed-by=eksctl
                     app.kubernetes.io/name=dynamodb-chart
                     app.kubernetes.io/version=v0.1.3
                     helm.sh/chart=dynamodb-chart-v0.1.3
                     k8s-app=dynamodb-chart
Annotations:         eks.amazonaws.com/role-arn: arn:aws:iam::<your AWS account ID>:role/ack-dynamodb-controller-role
                     meta.helm.sh/release-name: ack-dynamodb-controller
                     meta.helm.sh/release-namespace: ack-system
Image pull secrets:  <none>
Mountable secrets:   ack-dynamodb-controller-token-bbzxv
Tokens:              ack-dynamodb-controller-token-bbzxv
Events:              <none>

For IRSA to take effect, you need to restart the ACK Deployment:

# Note the deployment name for ACK service controller from following command

kubectl get deployments -n $ACK_SYSTEM_NAMESPACE

kubectl -n $ACK_SYSTEM_NAMESPACE rollout restart deployment ack-dynamodb-controller-dynamodb-chart

Confirm that the Deployment has restarted (its Pod should be Running) and that IRSA is properly configured. Use the Pod name from your own cluster in the second command:

kubectl get pods -n $ACK_SYSTEM_NAMESPACE

kubectl describe pod -n $ACK_SYSTEM_NAMESPACE ack-dynamodb-controller-dynamodb-chart-7dc99456c6-6shrm | grep "^\s*AWS_"

# The output should contain following two lines:

AWS_ROLE_ARN=arn:aws:iam::<AWS_ACCOUNT_ID>:role/<IAM_ROLE_NAME>

AWS_WEB_IDENTITY_TOKEN_FILE=/var/run/secrets/eks.amazonaws.com/serviceaccount/token
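The same check can be done from inside any Go program running in the Pod. Here is a minimal sketch that reports which of the two variables shown above are missing. The missingIRSAVars helper is hypothetical (my own naming), and it only checks that the variables are set, not that the role can actually be assumed.

```go
package main

import (
	"fmt"
	"os"
)

// irsaEnvVars lists the two variables that appear in the Pod
// description when IRSA is configured.
var irsaEnvVars = []string{"AWS_ROLE_ARN", "AWS_WEB_IDENTITY_TOKEN_FILE"}

// missingIRSAVars (hypothetical helper) returns the names that the
// lookup function reports as unset or empty.
func missingIRSAVars(lookup func(string) (string, bool)) []string {
	var missing []string
	for _, name := range irsaEnvVars {
		if v, ok := lookup(name); !ok || v == "" {
			missing = append(missing, name)
		}
	}
	return missing
}

func main() {
	if missing := missingIRSAVars(os.LookupEnv); len(missing) > 0 {
		fmt.Println("IRSA does not look configured, missing:", missing)
		return
	}
	fmt.Println("IRSA environment variables are present")
}
```

Passing the lookup function as a parameter keeps the check easy to exercise without touching the real process environment.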

Now that we're done with the configuration ...

We can move on to the fun parts!

Start by creating the DynamoDB table

Here is what the manifest looks like:

apiVersion: dynamodb.services.k8s.aws/v1alpha1
kind: Table
metadata:
  name: dynamodb-urls-ack
spec:
  tableName: urls
  attributeDefinitions:
    - attributeName: shortcode
      attributeType: S
  billingMode: PAY_PER_REQUEST
  keySchema:
    - attributeName: shortcode
      keyType: HASH
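DynamoDB rejects a table whose keySchema names an attribute that is not declared in attributeDefinitions, so it is worth checking that the two lists agree before applying a manifest like this. Here is a minimal sketch of that check in Go. The types and the undefinedKeys helper are simplified stand-ins that I made up for illustration; they are not the ACK or AWS SDK types.

```go
package main

import "fmt"

// Simplified stand-ins for the two lists in the Table spec.
type attributeDefinition struct{ Name, Type string }
type keySchemaElement struct{ Name, KeyType string }

// undefinedKeys (hypothetical helper) returns the key schema attribute
// names that have no matching attribute definition.
func undefinedKeys(defs []attributeDefinition, keys []keySchemaElement) []string {
	defined := make(map[string]bool, len(defs))
	for _, d := range defs {
		defined[d.Name] = true
	}
	var missing []string
	for _, k := range keys {
		if !defined[k.Name] {
			missing = append(missing, k.Name)
		}
	}
	return missing
}

func main() {
	defs := []attributeDefinition{{Name: "shortcode", Type: "S"}}

	// A key that is declared: nothing is reported.
	ok := []keySchemaElement{{Name: "shortcode", KeyType: "HASH"}}
	fmt.Println(undefinedKeys(defs, ok))

	// A key that is not declared: the name is reported.
	bad := []keySchemaElement{{Name: "email", KeyType: "HASH"}}
	fmt.Println(undefinedKeys(defs, bad))
}
```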

Clone the project, change to the correct directory and apply the manifest:

git clone https://github.com/abhirockzz/dynamodb-ack-cdk8s

cd dynamodb-ack-cdk8s

# create table

kubectl apply -f manual/dynamodb-ack.yaml

You can check the DynamoDB table in the AWS console, or use the AWS CLI (aws dynamodb list-tables) to confirm.

Our table is ready, now we can deploy our URL shortener application.

But, before that, we need to create a Docker image and push it to a private repository in Amazon Elastic Container Registry (ECR).

Create private repository in Amazon ECR

Login to ECR:

aws ecr get-login-password --region <enter region> | docker login --username AWS --password-stdin <enter aws_account_id>.dkr.ecr.<enter region>.amazonaws.com

Create repository:

aws ecr create-repository \
  --repository-name dynamodb-app \
  --region <enter AWS region>

Build image and push to ECR

# if you're on Mac M1

#export DOCKER_DEFAULT_PLATFORM=linux/amd64

docker build -t dynamodb-app .

docker tag dynamodb-app:latest <enter aws_account_id>.dkr.ecr.<enter region>.amazonaws.com/dynamodb-app:latest

docker push <enter aws_account_id>.dkr.ecr.<enter region>.amazonaws.com/dynamodb-app:latest

Just like the controller, our application also needs IRSA to be able to execute GetItem and PutItem API calls on DynamoDB.
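The contents of manual/policy.json are not reproduced here. As a rough sketch of what a policy limited to those two calls could look like, the Go program below builds and prints one. Everything in it is an assumption for illustration: the appPolicy and tableARN helpers, the account ID 111122223333 and the choice to scope the policy to a single table. Treat the file in the repository as the source of truth.

```go
package main

import (
	"encoding/json"
	"fmt"
)

// statement and policy model just enough of an IAM policy document
// for this example.
type statement struct {
	Effect   string   `json:"Effect"`
	Action   []string `json:"Action"`
	Resource string   `json:"Resource"`
}

type policy struct {
	Version   string      `json:"Version"`
	Statement []statement `json:"Statement"`
}

// tableARN builds the ARN of a DynamoDB table.
func tableARN(region, accountID, table string) string {
	return fmt.Sprintf("arn:aws:dynamodb:%s:%s:table/%s", region, accountID, table)
}

// appPolicy (hypothetical helper) allows only GetItem and PutItem on
// one table.
func appPolicy(region, accountID, table string) policy {
	return policy{
		Version: "2012-10-17",
		Statement: []statement{{
			Effect:   "Allow",
			Action:   []string{"dynamodb:GetItem", "dynamodb:PutItem"},
			Resource: tableARN(region, accountID, table),
		}},
	}
}

func main() {
	// Placeholder account ID; substitute your own.
	p := appPolicy("us-east-1", "111122223333", "urls")

	out, err := json.MarshalIndent(p, "", "  ")
	if err != nil {
		panic(err)
	}
	fmt.Println(string(out))
}
```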

Let's create another IRSA for the application

# you can change the policy name. make sure you use the same name in subsequent commands

aws iam create-policy --policy-name dynamodb-irsa-policy --policy-document file://manual/policy.json

eksctl create iamserviceaccount --name eks-dynamodb-app-sa --namespace default --cluster <enter EKS cluster name> --attach-policy-arn arn:aws:iam::<enter AWS account ID>:policy/dynamodb-irsa-policy --approve

kubectl describe serviceaccount/eks-dynamodb-app-sa

# output

Name: eks-dynamodb-app-sa

Namespace: default

Labels: app.kubernetes.io/managed-by=eksctl

Annotations: eks.amazonaws.com/role-arn: arn:aws:iam::<AWS account ID>:role/eksctl-eks-cluster-addon-iamserviceaccount-d-Role1-2KTGZO1GJRN

Image pull secrets: <none>

Mountable secrets: eks-dynamodb-app-sa-token-5fcvf

Tokens: eks-dynamodb-app-sa-token-5fcvf

Events: <none>

Finally, we can deploy our application!

In the manual/app.yaml file, make sure you replace the following attributes as per your environment:

- Service account name - the above example used eks-dynamodb-app-sa

- Docker image

- Container environment variables for AWS region (e.g. us-east-1) and table name (this will be urls since that's the name we used)

kubectl apply -f manual/app.yaml

# output

deployment.apps/dynamodb-app configured

service/dynamodb-app-service created

This will create a Deployment as well as Service to access the application.

Since the Service type is LoadBalancer, an appropriate AWS Load Balancer will be provisioned to allow for external access.

Check Pod and Service:

kubectl get pods

kubectl get service/dynamodb-app-service

# to get the load balancer hostname

APP_URL=$(kubectl get service/dynamodb-app-service -o jsonpath="{.status.loadBalancer.ingress[0].hostname}")

echo $APP_URL

# output example

a0042d5b5b0ad40abba9c6c42e6342a2-879424587.us-east-1.elb.amazonaws.com

You have deployed the application and know the endpoint over which it's publicly accessible.

You can try out the URL shortener service

curl -i -X POST -d 'https://abhirockzz.github.io/' $APP_URL:9090/

# output

HTTP/1.1 200 OK

Date: Thu, 21 Jul 2022 11:03:40 GMT

Content-Length: 25

Content-Type: text/plain; charset=utf-8

{"ShortCode":"ae1e31a6"}

If you get a Could not resolve host error while accessing the LB URL, wait for a minute or so and retry.

You should receive a JSON response with a short code. Enter http://<enter APP_URL>:9090/<shortcode> in your browser, e.g. http://a0042d5b5b0ad40abba9c6c42e6342a2-879424587.us-east-1.elb.amazonaws.com:9090/ae1e31a6, and you will be redirected to the original URL.

You can also use curl:

# example

curl -i http://a0042d5b5b0ad40abba9c6c42e6342a2-879424587.us-east-1.elb.amazonaws.com:9090/ae1e31a6

# output

HTTP/1.1 302 Found

Location: https://abhirockzz.github.io/

Date: Thu, 21 Jul 2022 11:07:58 GMT

Content-Length: 0
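If you prefer to exercise the service from Go instead of curl, the two requests above translate into a small client. This is only a sketch: shorten and resolve are names I made up, the text/plain content type is an assumption (curl -d sends a form content type), and fakeShortener is an in-process stand-in that returns the same responses as the output above so that the example runs without a cluster. Point baseURL at http://$APP_URL:9090 to talk to the real application.

```go
package main

import (
	"encoding/json"
	"fmt"
	"net/http"
	"net/http/httptest"
	"strings"
)

// shortenResponse matches the JSON body returned by the POST request.
type shortenResponse struct {
	ShortCode string `json:"ShortCode"`
}

// shorten POSTs the long URL as the raw request body and returns the
// short code from the JSON response.
func shorten(client *http.Client, baseURL, longURL string) (string, error) {
	resp, err := client.Post(baseURL+"/", "text/plain", strings.NewReader(longURL))
	if err != nil {
		return "", err
	}
	defer resp.Body.Close()
	if resp.StatusCode != http.StatusOK {
		return "", fmt.Errorf("unexpected status: %s", resp.Status)
	}
	var out shortenResponse
	if err := json.NewDecoder(resp.Body).Decode(&out); err != nil {
		return "", err
	}
	return out.ShortCode, nil
}

// resolve requests /<code> without following the redirect and returns
// the Location header of the 302 response.
func resolve(client *http.Client, baseURL, code string) (string, error) {
	noFollow := *client // copy so the caller's client is left untouched
	noFollow.CheckRedirect = func(*http.Request, []*http.Request) error {
		return http.ErrUseLastResponse
	}
	resp, err := noFollow.Get(baseURL + "/" + code)
	if err != nil {
		return "", err
	}
	defer resp.Body.Close()
	if resp.StatusCode != http.StatusFound {
		return "", fmt.Errorf("unexpected status: %s", resp.Status)
	}
	return resp.Header.Get("Location"), nil
}

// fakeShortener is a stand-in for the deployed app: it always answers
// with the short code and redirect target shown in the curl output.
func fakeShortener() *httptest.Server {
	return httptest.NewServer(http.HandlerFunc(func(w http.ResponseWriter, r *http.Request) {
		if r.Method == http.MethodPost {
			fmt.Fprint(w, `{"ShortCode":"ae1e31a6"}`)
			return
		}
		http.Redirect(w, r, "https://abhirockzz.github.io/", http.StatusFound)
	}))
}

func main() {
	srv := fakeShortener()
	defer srv.Close()

	code, err := shorten(srv.Client(), srv.URL, "https://abhirockzz.github.io/")
	if err != nil {
		panic(err)
	}
	target, err := resolve(srv.Client(), srv.URL, code)
	if err != nil {
		panic(err)
	}
	fmt.Println(code, "->", target)
}
```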

Enough of YAML I guess! Like I promised earlier, the second half will demonstrate how to achieve the same using cdk8s and Go.

Kubernetes infrastructure as Go code with cdk8s

Assuming you've already cloned the project (as per above instructions), change to a different directory:

cd dynamodb-cdk8s

This is a pre-created cdk8s project that you can use. The entire logic is in the main.go file. We will first try it out and confirm that it works the same way. After that, we will dive into the nitty-gritty of the code.

Delete the previously created DynamoDB table along with the Deployment (and Service):

# you can also delete the table directly from AWS console

aws dynamodb delete-table --table-name urls

# this will delete Deployment and Service (as well as AWS Load Balancer)

kubectl delete -f manual/app.yaml

Use cdk8s synth to generate the manifest for DynamoDB table and the application. We can then apply it using kubectl.

See the difference? Earlier, we defined the DynamoDB table, Deployment (and Service) manifests manually. cdk8s does not remove YAML altogether, but it provides a way for us to leverage regular programming languages (Go in this case) to define the components of our solution.

export TABLE_NAME=urls

export SERVICE_ACCOUNT=eks-dynamodb-app-sa

export DOCKER_IMAGE=<enter ECR repo that you created earlier>

cdk8s synth

ls -lrt dist/

#output

0000-dynamodb.k8s.yaml

0001-deployment.k8s.yaml

You will see two different manifests being generated by cdk8s since we defined two separate cdk8s.Charts in the code - more on this soon.
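The three variables exported before cdk8s synth are how the table name, service account and image reach the Go code. The repository's main.go is not shown in full here, so the following is only a sketch of one way to read and validate such settings up front. The settings type and the loadSettings helper are hypothetical, not taken from the project.

```go
package main

import (
	"fmt"
	"os"
)

// settings holds the values exported before running cdk8s synth.
type settings struct {
	TableName      string
	ServiceAccount string
	Image          string
}

// loadSettings (hypothetical helper) reads the three variables through
// lookup and returns an error naming every variable that is unset or empty.
func loadSettings(lookup func(string) (string, bool)) (settings, error) {
	var missing []string
	get := func(name string) string {
		v, ok := lookup(name)
		if !ok || v == "" {
			missing = append(missing, name)
		}
		return v
	}

	s := settings{
		TableName:      get("TABLE_NAME"),
		ServiceAccount: get("SERVICE_ACCOUNT"),
		Image:          get("DOCKER_IMAGE"),
	}
	if len(missing) > 0 {
		return settings{}, fmt.Errorf("missing environment variables: %v", missing)
	}
	return s, nil
}

func main() {
	s, err := loadSettings(os.LookupEnv)
	if err != nil {
		fmt.Println(err)
		return
	}
	fmt.Printf("%+v\n", s)
}
```

Failing early with the full list of missing names is friendlier than synthesizing a manifest with an empty image or table name and discovering it at kubectl apply time.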

We can deploy them one by one:

kubectl apply -f dist/0000-dynamodb.k8s.yaml

#output

table.dynamodb.services.k8s.aws/dynamodb-dynamodb-ack-cdk8s-table-c88d874d created

configmap/export-dynamodb-urls-info created

fieldexport.services.k8s.aws/export-dynamodb-tablename created

fieldexport.services.k8s.aws/export-dynamodb-region created

As always, you can check the DynamoDB table either in console or AWS CLI - aws dynamodb describe-table --table-name urls. Looking at the output, the DynamoDB table part seems familiar...

But what's fieldexport.services.k8s.aws??

... And why do we need a ConfigMap? I will give you the gist here.

In the previous iteration, we hard-coded the table name and region in manual/app.yaml. While this works for this sample app, it is not scalable and might not even work for some resources if the metadata (like the name) is randomly generated. That's why there is this concept of a FieldExport that can "export any spec or status field from an ACK resource into a Kubernetes ConfigMap or Secret".

You can read up on the details in the ACK documentation along with some examples. What you will see here is how to define a FieldExport and ConfigMap along with the Deployment which needs to be configured to accept its environment variables from the ConfigMap - all this in code, using Go (more on this during code walk-through).

Check the FieldExport and ConfigMap:

kubectl get fieldexport

#output

NAME AGE

export-dynamodb-region 19s

export-dynamodb-tablename 19s

kubectl get configmap/export-dynamodb-urls-info -o yaml

We started out with a blank ConfigMap (as per cdk8s logic), but ACK magically populated it with the attributes from the Table custom resource.

apiVersion: v1
data:
  default.export-dynamodb-region: us-east-1
  default.export-dynamodb-tablename: urls
immutable: false
kind: ConfigMap
#....omitted
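Notice the shape of the keys in the ConfigMap: each one is the namespace of the FieldExport, a dot, and then the FieldExport name. Any consumer of the ConfigMap has to build the key the same way. Here is a minimal sketch of that convention; configMapKey and lookupExport are hypothetical helper names, and the map stands in for the ConfigMap data shown above.

```go
package main

import "fmt"

// configMapKey builds the key seen in the ConfigMap above:
// "<namespace>.<FieldExport name>".
func configMapKey(namespace, fieldExportName string) string {
	return namespace + "." + fieldExportName
}

// lookupExport (hypothetical helper) reads the value exported by a
// given FieldExport from ConfigMap data.
func lookupExport(data map[string]string, namespace, fieldExportName string) (string, bool) {
	v, ok := data[configMapKey(namespace, fieldExportName)]
	return v, ok
}

func main() {
	// Stand-in for the data section of export-dynamodb-urls-info.
	data := map[string]string{
		"default.export-dynamodb-region":    "us-east-1",
		"default.export-dynamodb-tablename": "urls",
	}

	table, _ := lookupExport(data, "default", "export-dynamodb-tablename")
	region, _ := lookupExport(data, "default", "export-dynamodb-region")
	fmt.Println(table, region)
}
```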

We can now use the second manifest - no surprises here. Just like in the previous iteration, all it contains is the application Deployment and the Service. Check Pod and Service:

kubectl apply -f dist/0001-deployment.k8s.yaml

#output

deployment.apps/dynamodb-app created

service/dynamodb-app-service configured

kubectl get pods

kubectl get svc

The entire setup is ready, just like it was earlier and you can test it the same way. I will not repeat the steps here. Instead, I will move to something more interesting.

cdk8s code walk-through

The logic is divided into two Charts. I will only focus on key sections of the code; the rest is omitted for brevity.

DynamoDB and configuration

We start by defining the DynamoDB table (name it urls) as well as the ConfigMap (note that it does not have any data at this point):

func NewDynamoDBChart(scope constructs.Construct, id string, props *MyChartProps) cdk8s.Chart {
    //...
    table := ddbcrd.NewTable(chart, jsii.String("dynamodb-ack-cdk8s-table"), &ddbcrd.TableProps{
        Spec: &ddbcrd.TableSpec{
            AttributeDefinitions: &[]*ddbcrd.TableSpecAttributeDefinitions{
                {AttributeName: jsii.String(primaryKeyName), AttributeType: jsii.String("S")}},
            BillingMode: jsii.String(billingMode),
            TableName:   jsii.String(tableName),
            KeySchema: &[]*ddbcrd.TableSpecKeySchema{
                {AttributeName: jsii.String(primaryKeyName),
                    KeyType: jsii.String(hashKeyType)}}}})
    //...
    cfgMap = cdk8splus22.NewConfigMap(chart, jsii.String("config-map"),
        &cdk8splus22.ConfigMapProps{
            Metadata: &cdk8s.ApiObjectMetadata{
                Name: jsii.String(configMapName)}})

Then we move on to the FieldExports - one each for the AWS region and the table name. As soon as these are created, the ConfigMap is populated with the required data as per from and to configuration in the FieldExport.

    //...
    // export the table name from the Table spec into the ConfigMap
    fieldExportForTable = servicesk8saws.NewFieldExport(chart, jsii.String("fexp-table"), &servicesk8saws.FieldExportProps{
        Metadata: &cdk8s.ApiObjectMetadata{Name: jsii.String(fieldExportNameForTable)},
        Spec: &servicesk8saws.FieldExportSpec{
            From: &servicesk8saws.FieldExportSpecFrom{Path: jsii.String(".spec.tableName"),
                Resource: &servicesk8saws.FieldExportSpecFromResource{
                    Group: jsii.String("dynamodb.services.k8s.aws"),
                    Kind:  jsii.String("Table"),
                    Name:  table.Name()}},
            To: &servicesk8saws.FieldExportSpecTo{
                Name: cfgMap.Name(),
                Kind: servicesk8saws.FieldExportSpecToKind_CONFIGMAP}}})

    // export the AWS region from the Table status into the same ConfigMap
    fieldExportForRegion = servicesk8saws.NewFieldExport(chart, jsii.String("fexp-region"), &servicesk8saws.FieldExportProps{
        Metadata: &cdk8s.ApiObjectMetadata{Name: jsii.String(fieldExportNameForRegion)},
        Spec: &servicesk8saws.FieldExportSpec{
            From: &servicesk8saws.FieldExportSpecFrom{
                Path: jsii.String(".status.ackResourceMetadata.region"),
                Resource: &servicesk8saws.FieldExportSpecFromResource{
                    Group: jsii.String("dynamodb.services.k8s.aws"),
                    Kind:  jsii.String("Table"),
                    Name:  table.Name()}},
            To: &servicesk8saws.FieldExportSpecTo{
                Name: cfgMap.Name(),
                Kind: servicesk8saws.FieldExportSpecToKind_CONFIGMAP}}})
    //...

The application chart

The core of our application is the Deployment itself:

func NewDeploymentChart(scope constructs.Construct, id string, props *MyChartProps) cdk8s.Chart {
    //...
    dep := cdk8splus22.NewDeployment(chart, jsii.String("dynamodb-app-deployment"), &cdk8splus22.DeploymentProps{
        Metadata: &cdk8s.ApiObjectMetadata{
            Name: jsii.String("dynamodb-app")},
        ServiceAccount: cdk8splus22.ServiceAccount_FromServiceAccountName(
            chart,
            jsii.String("aws-irsa"),
            jsii.String(serviceAccountName))})

The next important part is the container and its configuration. We specify the ECR image repository along with the environment variables - they reference the ConfigMap we defined in the previous chart (everything is connected!):

    //...
    container := dep.AddContainer(
        &cdk8splus22.ContainerProps{
            Name:  jsii.String("dynamodb-app-container"),
            Image: jsii.String(image),
            Port:  jsii.Number(appPort)})

    container.Env().AddVariable(jsii.String("TABLE_NAME"), cdk8splus22.EnvValue_FromConfigMap(
        cfgMap, jsii.String("default."+*fieldExportForTable.Name()),
        &cdk8splus22.EnvValueFromConfigMapOptions{Optional: jsii.Bool(false)}))

    container.Env().AddVariable(jsii.String("AWS_REGION"), cdk8splus22.EnvValue_FromConfigMap(
        cfgMap, jsii.String("default."+*fieldExportForRegion.Name()),
        &cdk8splus22.EnvValueFromConfigMapOptions{Optional: jsii.Bool(false)}))

Finally, we define the Service (type LoadBalancer) which enables external application access and tie it all together in the main function:

    //...
    dep.ExposeViaService(
        &cdk8splus22.DeploymentExposeViaServiceOptions{
            Name:        jsii.String("dynamodb-app-service"),
            ServiceType: cdk8splus22.ServiceType_LOAD_BALANCER,
            Ports: &[]*cdk8splus22.ServicePort{
                {Protocol: cdk8splus22.Protocol_TCP,
                    Port:       jsii.Number(lbPort),
                    TargetPort: jsii.Number(appPort)}}})
    //...

func main() {
    app := cdk8s.NewApp(nil)

    dynamoDB := NewDynamoDBChart(app, "dynamodb", nil)
    deployment := NewDeploymentChart(app, "deployment", nil)

    // the application chart depends on the DynamoDB chart
    deployment.AddDependency(dynamoDB)

    app.Synth()
}

Don't forget to delete resources..

# delete DynamoDB table, Deployment, Service etc.

kubectl delete -f dist/

# to uninstall the ACK controller

export SERVICE=dynamodb

helm uninstall -n $ACK_SYSTEM_NAMESPACE ack-$SERVICE-controller

# delete the EKS cluster. if created via eksctl:

eksctl delete cluster --name <enter name of eks cluster>

Wrap up..

AWS Controllers for Kubernetes help bridge the gap between traditional Kubernetes resources and AWS services by allowing you to manage both from a single control plane. You saw how to do this in the context of DynamoDB and a URL shortener application (deployed to Kubernetes). I encourage you to try out other AWS services that ACK supports - here is a complete list.

The approach presented here will work well if you just want to use cdk8s. However, depending on your requirements, there is another way this can be done by bringing AWS CDK into the picture.